PromptFoo: Secure AI Evaluation & Red Teaming Toolkit

Comprehensive Overview of PromptFoo: A Robust CLI and Library for Evaluating and Red-Teaming Large Language Models (LLMs)

Introduction to PromptFoo

PromptFoo is a cutting-edge command-line interface (CLI) tool and open-source library designed specifically for evaluating, red-teaming, and securing large language model (LLM) applications. Unlike traditional trial-and-error approaches in AI development, PromptFoo streamlines the process of building secure, reliable, and high-performance LLM-based systems by automating evaluations, vulnerability scanning, and comparative analysis across multiple models.

The tool is particularly valuable for developers, security researchers, and organizations looking to ensure that their AI applications are not only functionally robust but also resistant to adversarial attacks. By leveraging PromptFoo, teams can eliminate guesswork in prompt engineering, reduce risks associated with LLM misuse, and optimize performance metrics before deployment.

Key Features and Capabilities

1. Automated Evaluations for Prompts and Models

PromptFoo excels at automating the evaluation of prompts and models to ensure they meet predefined criteria for accuracy, reliability, and security. Users can test their LLM applications against a variety of scenarios, including:

- Accuracy Testing: Verifying that responses align with expected outputs.

- Consistency Checks: Ensuring responses remain coherent across repeated queries.

- Adversarial Robustness: Evaluating how well models handle malicious or deceptive inputs.

The tool integrates seamlessly with popular LLM providers such as OpenAI, Anthropic, Azure AI, and others, allowing users to compare performance metrics side-by-side. This comparative analysis helps developers fine-tune their models for optimal results while maintaining security standards.

2. Red-Teaming and Vulnerability Scanning

One of the most critical aspects of PromptFoo is its ability to perform red-teaming exercises—essentially, simulating attacks on LLM applications to identify vulnerabilities before they are exploited by malicious actors. This includes:

- Prompt Injection Attacks: Testing whether prompts can manipulate model responses.

- Hallucination Detection: Identifying instances where models generate false or nonsensical information.

- Privacy Leakage Checks: Ensuring sensitive data remains confidential within LLM interactions.

By running these red-team exercises, developers can proactively patch vulnerabilities and strengthen their AI systems against potential breaches. The results are presented in detailed vulnerability reports, making it easy to prioritize fixes and improve security posture.

3. Local Execution Without Data Leakage

Unlike many LLM evaluation tools that require cloud-based execution, PromptFoo operates entirely locally on the user’s machine. This ensures:

- Privacy Protection: No sensitive prompts or model responses leave the developer’s environment.

- Offline Capability: Users can evaluate models without relying on external APIs, reducing dependency on third-party services.

This feature is particularly beneficial for organizations handling classified information or complying with strict data protection regulations such as GDPR and CCPA.

4. Integration with CI/CD Pipelines

PromptFoo seamlessly integrates into continuous integration/continuous deployment (CI/CD) workflows, allowing teams to automate security checks during the development process. This includes:

- Automated Pull Request Reviews: Scanning code for LLM-related vulnerabilities before merging changes.

- Pre-Commit Hooks: Running evaluations as part of the build process to catch issues early.

- Customizable Checkpoints: Setting up automated tests that trigger on specific events, such as new model updates or prompt modifications.

By embedding PromptFoo into CI/CD pipelines, teams can ensure that every iteration of their LLM applications undergoes rigorous scrutiny, reducing the risk of deployment failures due to security flaws.

5. Cross-Model Comparison

PromptFoo supports a wide range of LLM providers, enabling users to compare performance metrics across different models in real time. This includes:

- OpenAI (GPT-3/4, ChatGPT)

- Anthropic (Claude)

- Azure AI

- AWS Bedrock

- Ollama and other local LLMs

By running evaluations against multiple models simultaneously, developers can identify which model best suits their specific use case while ensuring it meets security and reliability benchmarks.

User Experience: Getting Started with PromptFoo

Installation Options

PromptFoo is available through multiple installation methods to accommodate different user preferences:

- npm:

npm install -g promptfoo - Homebrew (macOS):

brew install promptfoo - pip:

pip install promptfoo - npx:

npx promptfoo@latestfor temporary usage without installation

For developers working with Node.js, the library can also be imported directly into their projects:

const { PromptFoo } = require('promptfoo');

Setting Up an API Key

Most LLM providers require authentication via an API key. Users can set this up in their environment variables:

export OPENAI_API_KEY=sk-abc123

This ensures that PromptFoo interacts securely with external APIs while maintaining local privacy.

Running Initial Evaluations

To begin evaluating a prompt or model, users follow these steps:

- Navigate to the example directory:

cd getting-started

- Initialize PromptFoo for evaluation:

promptfoo init --example getting-started

- Execute an evaluation and view results:

promptfoo eval

promptfoo view

The tool provides a user-friendly interface for viewing results, including performance metrics, security findings, and comparative analysis.

Visualizing PromptFoo in Action

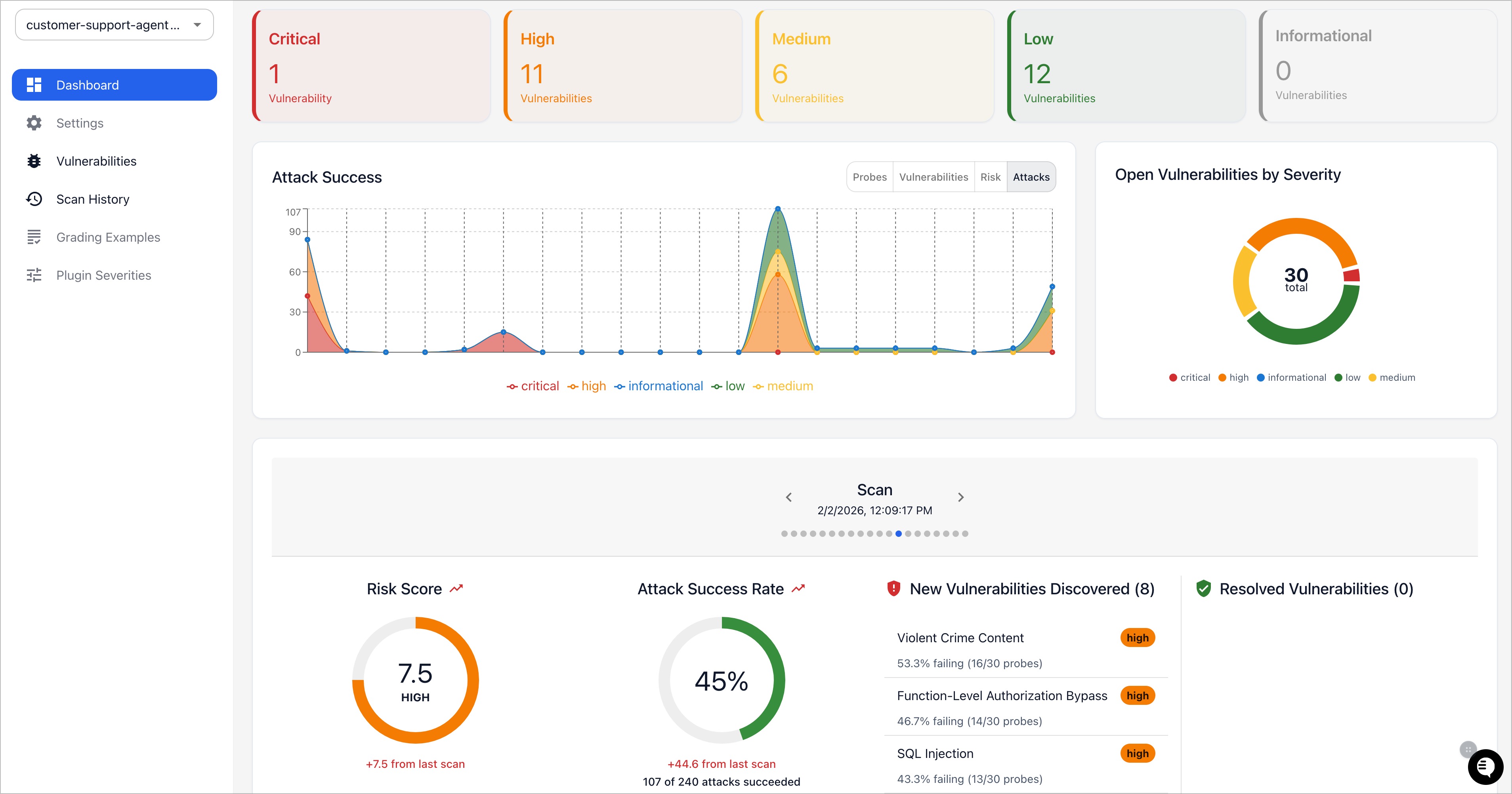

Web-Based Evaluation Dashboard

PromptFoo’s web-based viewer allows users to visualize evaluations across multiple models. For example, the dashboard might display a comparison between GPT-4 and Claude, highlighting differences in accuracy, response coherence, and adversarial robustness.

This graphical representation helps developers quickly identify which model performs best under various conditions while ensuring security compliance.

Command-Line Interface (CLI) Workflow

For users who prefer working in the terminal, PromptFoo offers a streamlined CLI experience. The tool supports features like:

- Live Reload: Automatically updates results as prompts are modified.

- Caching: Stores evaluation results for faster subsequent runs.

This efficiency reduces the time required to iterate on LLM applications, allowing developers to focus more on innovation rather than manual testing.

Security Vulnerability Reports

When conducting red-teaming exercises, PromptFoo generates detailed vulnerability reports that outline:

- Identified Risks: Such as prompt injection vulnerabilities or privacy leaks.

- Severity Levels: Classifying issues based on potential impact.

- Recommended Fixes: Actionable steps to mitigate identified risks.

These reports serve as a comprehensive guide for strengthening LLM applications against adversarial threats.

Why Choose PromptFoo?

Developer-First Approach

PromptFoo is designed with developers in mind, offering:

- Speed: Quick setup and execution times.

- Flexibility: Works across any LLM API or programming language.

- Performance Metrics: Data-driven insights to make informed decisions.

By eliminating guesswork, PromptFoo enables teams to build AI applications that are both functional and secure from the ground up.

Privacy and Security

Unlike cloud-based evaluation tools, PromptFoo ensures that all interactions remain private and local. This is critical for organizations handling sensitive data or complying with privacy regulations.

Battle-Tested in Production

PromptFoo has been used by teams serving millions of users in production environments. Its reliability and effectiveness have been validated through real-world deployments, making it a trusted tool for AI development.

Open Source Community Support

As an open-source project under the MIT license, PromptFoo benefits from:

- Active Contributions: A growing community contributing to its development.

- Community Discussions: Accessible via Discord and GitHub discussions.

- Contribution Guide: Detailed instructions on how to contribute to the project.

This collaborative approach ensures that PromptFoo continues to evolve based on user feedback and emerging security challenges in AI development.

Learning Resources

For users looking to dive deeper into PromptFoo’s capabilities, the following resources are available:

- Getting Started Guide – Basics of setting up and running evaluations.

- Red-Teaming Documentation – In-depth information on vulnerability scanning techniques.

- CLI Usage Guide – Detailed instructions for CLI commands.

- Supported Models – List of compatible LLM providers.

- Code Scanning Guide – Integrating PromptFoo into CI/CD pipelines.

Additionally, users can engage with the community via:

- Website: PromptFoo Official Site

- Discord Server: Join the Community

Conclusion

PromptFoo represents a significant advancement in AI development tools by combining automated evaluations, red-teaming capabilities, and local execution into a single, user-friendly platform. Whether developers are fine-tuning prompts for accuracy, securing LLM applications against adversarial attacks, or integrating security checks into CI/CD pipelines, PromptFoo provides the necessary tools to build robust and reliable AI systems.

By prioritizing privacy, flexibility, and performance metrics, PromptFoo empowers teams to ship secure, high-quality LLM applications without compromising on efficiency or innovation. As the field of AI continues to evolve, tools like PromptFoo will play a crucial role in ensuring that AI applications remain both effective and resilient against emerging threats.

This detailed description encapsulates the essence of PromptFoo’s functionality, user experience, and benefits while incorporating visual references from the provided input.

Enjoying this project?

Discover more amazing open-source projects on TechLogHub. We curate the best developer tools and projects.

Repository:https://github.com/promptfoo/promptfoo

GitHub - promptfoo/promptfoo: PromptFoo: Secure AI Evaluation & Red Teaming Toolkit

<h1 id="comprehensiveoverviewofpromptfooarobustcliandlibraryforevaluatingandredteaminglargelanguagemodelsllms"><strong>Comprehensive Overview of PromptFoo: A Ro...

github - promptfoo/promptfoo